Self-managed LLMs: The Future of AI Sovereignty and Operational Efficiency

May 6, 2026

Self-Managed Mid-weight LLMs: The Future?

We have arrived at the moment that has been predicted for some time: the end of the flat-rate token model. For the last few years, the GenAI industry has been running at astronomical losses, under the false pretense that the cost of computation was irrelevant. It was fast, it was spectacular, but it was financially unsustainable.

The recent GitHub Copilot announcement confirms what many saw coming: the flat-rate model dies in June 2026. The shift to usage-based billing, with cost multipliers of up to 27x for some models, is a reality check. Agentic workflows in the cloud have become popular, and they have further eroded provider margins.

The Future Points Local (and Will Demand More Discipline)

Given this scenario, rethinking the consumption strategy seems to be the only reasonable way forward.

In my opinion, the future involves adopting an AI sovereignty strategy. This strategy should aim to delegate the vast majority of everyday tasks to mid-range models (20B-35B), where we have absolute control over the infrastructure and the price. This means avoiding any form of "vendor lock-in" and betting on flexible tools that allow for fine-grained segregation of tasks among multiple models (which is why I am a staunch supporter of alternatives like OpenCode or pi.dev over closed ecosystems like those of Anthropic or OpenAI).

This does not necessarily mean executing everything locally on the developer's laptop; it spans from inference servers in our VPC to services like Vertex AI with private endpoints. What is clear to me is that the frontier models should be reserved only for critical or extremely complex operations.
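To make the split between mid-range and frontier models concrete, here is a minimal routing sketch. The tier names, the `Task` fields, and the complexity scale are all hypothetical, chosen only for illustration; a real setup would route through whatever provider configuration the tooling exposes.

```python
from dataclasses import dataclass

# Hypothetical tier labels; not tied to any real provider or tool.
LOCAL_TIER = "mid-range-self-managed"
FRONTIER_TIER = "frontier"


@dataclass(frozen=True)
class Task:
    description: str
    critical: bool = False   # e.g. production-blocking or security-sensitive
    complexity: int = 1      # assumed scale: 1 (trivial) to 5 (extremely complex)


def choose_tier(task: Task, complexity_threshold: int = 4) -> str:
    """Reserve the frontier tier for critical or very complex tasks only."""
    if task.critical or task.complexity >= complexity_threshold:
        return FRONTIER_TIER
    return LOCAL_TIER


if __name__ == "__main__":
    for t in (Task("rename a variable"), Task("redesign auth flow", complexity=5)):
        print(t.description, "->", choose_tier(t))
```

The point of keeping the rule this explicit is that the default is the cheap tier, and escalation has to be justified by the task itself.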

This is not just about cost optimization; the strategy directly connects to an operational and design decision. As generalist models become standardized, the true value lies in specializing them for specific tasks using proprietary data and context. Opting for local or self-managed models allows us to fine-tune them for specific use cases, maintain control, and adapt them continually. The goal is not to use an astronomically costly generalist model for everything, but to have multiple, specialized (and much lighter) models. In this scenario, the competitive advantage no longer comes from the model in itself, but from how effectively it solves concrete problems within your organization.

If one decides to pursue this strategy, four factors will be critical and will cause the most headaches: the importance of context (in both quality and quantity), fine-tuning, quality controls, and the shift in usage mentality.

Context, Fine-Tuning, Quality, and Usage Mentality

Context Management

Context management is already critical with frontier models; in our case, its importance is squared. The available window will be smaller, and the model's attention and precision will be much more fragile. Quality will drop sharply if we saturate it.

In his article on Context Engineering, Ezequiel explains the concept of "Context Rot" in detail, drawing an analogy with how RAM had to be managed in the not-so-distant past. It's not about forcing in more information by brute force, but about applying Progressive Disclosure architectures and, especially, being very strict about his point on Targeted Retrieval. We must provide only the necessary, delimited, and controlled information for the task, so that the model focuses its attention on what is strictly indispensable.
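As a rough illustration of the targeted-retrieval idea, the sketch below keeps only snippets that share words with the task and stops once a token budget is reached. The word-overlap scoring and the four-characters-per-token estimate are simplifying assumptions of mine, not the method described in the referenced article; a real system would use a proper tokenizer and a better relevance signal.

```python
def estimate_tokens(text: str) -> int:
    """Crude estimate: roughly one token per four characters (an assumption)."""
    return max(1, len(text) // 4)


def select_context(query: str, snippets: list[str], budget_tokens: int) -> list[str]:
    """Return the snippets most related to the query that fit within the budget."""
    query_words = set(query.lower().split())

    scored = []
    for snippet in snippets:
        overlap = len(query_words & set(snippet.lower().split()))
        if overlap > 0:  # drop anything unrelated to the task
            scored.append((overlap, snippet))
    scored.sort(key=lambda pair: pair[0], reverse=True)  # most relevant first

    selected, used = [], 0
    for _, snippet in scored:
        cost = estimate_tokens(snippet)
        if used + cost <= budget_tokens:  # never exceed the budget
            selected.append(snippet)
            used += cost
    return selected
```

Unrelated snippets never reach the prompt, and the budget acts as a hard ceiling rather than a suggestion.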

The Operational Advantage of Fine-Tuning

Knowing that context must be managed as if it were sacred, the ability to specialize models (even "light" ones, like Qwen 3.6 27b or Gemma 4 31b) gives us a clear operational advantage.

It will involve an initial investment in the training process, but specializing agents will help us drastically reduce the amount of context we need to provide for each specific operation. The intensive use of skills, rules, and long .md configuration files is fine if you have a broad context and its management is not critical, but in our situation, we want to minimize input tokens so the model can refine its precision. A model trained on your stack or the specific needs of the task it is going to execute has already learned the lesson.

Training this type of model is completely manageable today thanks to parameter-efficient fine-tuning techniques like LoRA. In fact, platforms like Google Cloud's Vertex AI have standardized managed fine-tuning, offering these processes under usage-based billing models. In parallel, frameworks like NVIDIA NeMo allow for implementing these techniques with greater control over the infrastructure, while optimized libraries like Unsloth facilitate their efficient execution even in resource-limited environments.
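To see why LoRA makes this affordable, it helps to count parameters. LoRA freezes a weight matrix of shape `d_out x d_in` and trains two small matrices of shapes `d_out x r` and `r x d_in` instead. The dimensions and rank below are illustrative assumptions, not the configuration of any model named in this article.

```python
def full_params(d_in: int, d_out: int) -> int:
    """Parameters updated when fine-tuning the whole weight matrix."""
    return d_in * d_out


def lora_params(d_in: int, d_out: int, rank: int) -> int:
    """Parameters trained by LoRA: B (d_out x rank) plus A (rank x d_in)."""
    return rank * (d_in + d_out)


if __name__ == "__main__":
    d_in = d_out = 4096   # assumed layer width, for illustration only
    rank = 16             # assumed LoRA rank
    full = full_params(d_in, d_out)
    lora = lora_params(d_in, d_out, rank)
    print(f"full: {full:,}  lora: {lora:,}  ratio: {lora / full:.4%}")
```

With these assumed numbers, the adapter trains well under one percent of the parameters of the layer it adapts, which is what brings fine-tuning within reach of modest hardware.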

Quality Control and Deterministic Tools

This is another concept that is key in any scenario, and it becomes even more critical with non-frontier models.

As Jason Gorman argues in his series “The AI-Ready Software Developer”, the scalability of AI-assisted development depends on integrating models into deterministic workflows with strict feedback. In this approach, proposals are generated, but their validity is always verified through mechanisms like automated tests, continuous integration, or static analysis. This involves applying practices such as deriving tests from executable specifications (e.g., in Gherkin) or systematically reusing the results from tools like linters or test suites. The model proposes, the engineering system validates, restricts, corrects, and forces iteration.
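The "model proposes, system validates" loop can be sketched in a few lines. This is my own minimal illustration, not code from the referenced series: `propose` stands in for any model call, and each validator stands in for a deterministic check such as a test suite or a linter. All names are hypothetical.

```python
from typing import Callable, Optional

# (task, feedback collected so far) -> candidate output
Proposer = Callable[[str, list[str]], str]
# candidate -> error message, or None when the check passes
Validator = Callable[[str], Optional[str]]


def propose_until_valid(
    task: str,
    propose: Proposer,
    validators: list[Validator],
    max_rounds: int = 3,
) -> Optional[str]:
    """Ask for candidates until every deterministic check passes, or give up."""
    feedback: list[str] = []
    for _ in range(max_rounds):
        candidate = propose(task, feedback)
        errors = []
        for check in validators:
            message = check(candidate)
            if message is not None:
                errors.append(message)
        if not errors:
            return candidate      # accepted only after all checks pass
        feedback.extend(errors)   # failures are fed back into the next attempt
    return None                   # bounded: escalate to a human instead of looping
```

The bounded number of rounds matters: when the checks keep failing, the loop stops and hands the problem back to a person instead of burning tokens.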

The Change in Mentality

All of this leads to a paradigm shift compared to the usual agentic flows with cloud LLMs. Operating with local models requires working at a much lower level and with more human intervention, because of the inherent limits of the models. Far from being an inconvenience, this constant engagement is, in my opinion, positive, because it avoids the dangerous invitation to "detach" from the code that models like Opus 4.6 provoke so easily. The days of launching a prompt like "review the entire repo and propose this high-level change that involves a ton of modifications" are over. Forcing ourselves to break down the problem and guide the model returns control over the software to us.

Tinkering Locally

I have been experimenting for a few weeks with a local model configuration for my daily work. After multiple tests and ongoing experimentation, I have reached a functional strategy that mitigates some of the usual shortcomings of small models in limited execution environments.

My test environment is a MacBook Pro M4 with 48 GB of RAM. Although it is a powerful machine, running models around 30B parameters demands optimizing every available gigabyte of memory (on Apple Silicon, the GPU shares unified memory with the CPU, so it plays the role of VRAM), especially when the KV cache balloons with wide context windows.
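A back-of-the-envelope estimate shows how quickly the KV cache grows with context. For a standard attention layout, the cache stores one key and one value vector per layer, per KV head, per token. The architecture numbers below are purely illustrative assumptions, not the real dimensions of any model mentioned here, and models with sliding-window or hybrid attention would need less.

```python
def kv_cache_bytes(
    n_layers: int,
    n_kv_heads: int,
    head_dim: int,
    context_tokens: int,
    bytes_per_element: int = 2,  # 2 bytes for an fp16 cache
) -> int:
    """Size of a full-attention KV cache: keys plus values for every token."""
    per_token = 2 * n_layers * n_kv_heads * head_dim * bytes_per_element
    return per_token * context_tokens


if __name__ == "__main__":
    # Hypothetical architecture, chosen only to illustrate the scaling.
    for context in (8_192, 32_768, 131_072):
        size = kv_cache_bytes(n_layers=64, n_kv_heads=8, head_dim=128,
                              context_tokens=context)
        print(f"{context:>7} tokens -> {size / 1024**3:.1f} GiB")
```

The cache grows linearly with the context length, so quadrupling the window quadruples the memory it takes on top of the model weights.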

Orchestration: llama.cpp and llama-swap

To serve the models, I rely on llama.cpp and, especially, on llama-swap. Why not Ollama? Well, using llama.cpp requires a bit more configuration, but you gain in optimization and efficiency.

llama-swap is the key component: it swaps models on demand. Instead of trying to load three models in parallel and saturating the memory, llama-swap transparently loads and unloads them for my OpenCode instance. The repository is listed under Reference Repositories below if you want to take a look; it is relatively simple and possibly has a lot of room for improvement.
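The on-demand idea can be pictured as a single slot that holds one model at a time. The class below is only a conceptual sketch of that behaviour, written for illustration; it is not how llama-swap is implemented, and the `loader` and `unloader` callbacks are hypothetical stand-ins for starting and stopping an inference server.

```python
from typing import Any, Callable, Optional


class SingleSlotSwapper:
    """Keeps at most one model resident and swaps it when another is requested."""

    def __init__(
        self,
        loader: Callable[[str], Any],
        unloader: Callable[[str, Any], None],
    ) -> None:
        self._loader = loader
        self._unloader = unloader
        self._resident_name: Optional[str] = None
        self._resident: Any = None

    def get(self, name: str) -> Any:
        """Return the requested model, evicting the current one if it differs."""
        if name != self._resident_name:
            if self._resident_name is not None:
                self._unloader(self._resident_name, self._resident)  # free memory first
            self._resident = self._loader(name)
            self._resident_name = name
        return self._resident
```

Repeated requests for the same model are free; only a change of model pays the loading cost, which is the trade made in exchange for never holding more than one set of weights in memory.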

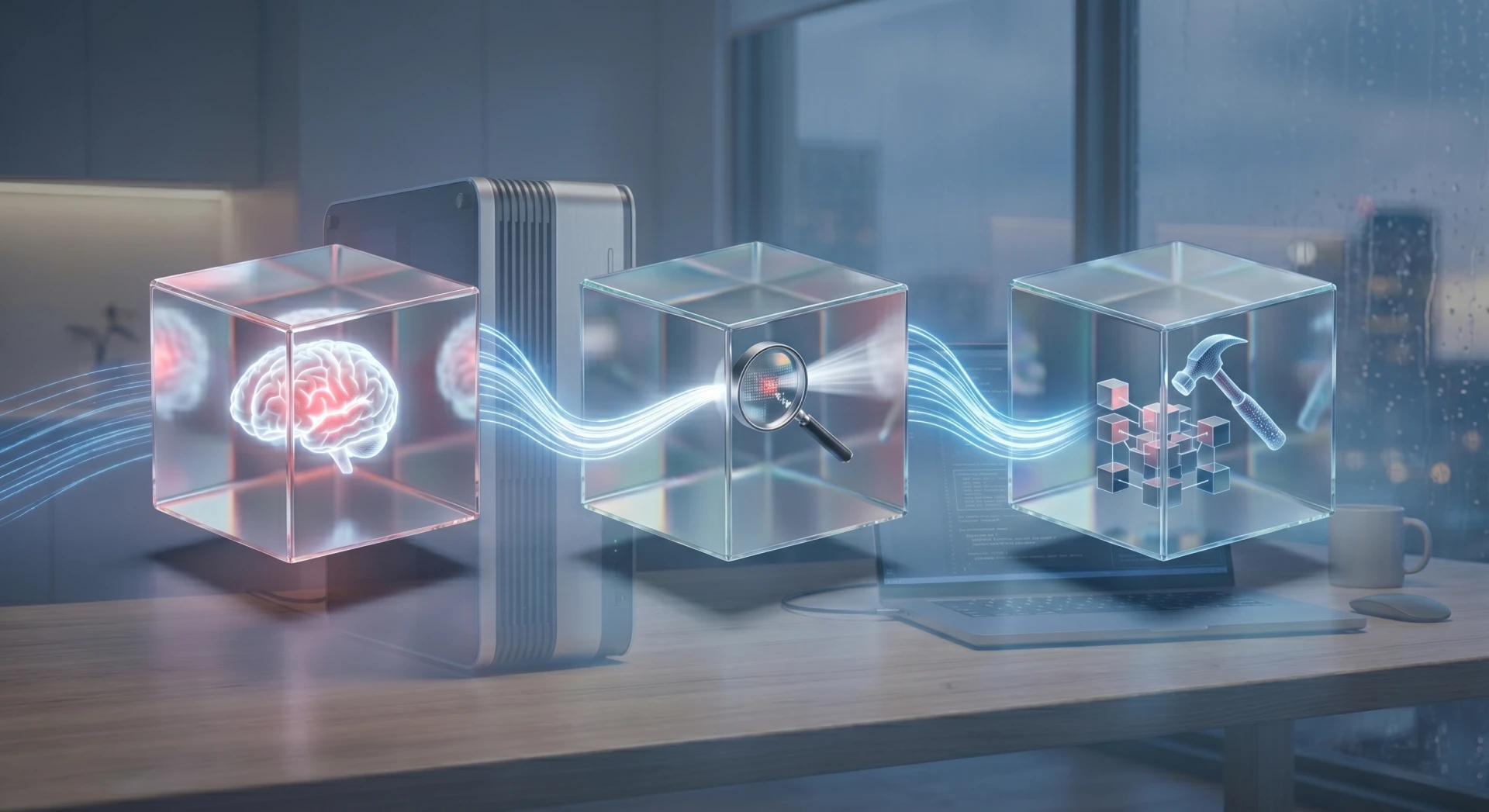

The 3-Layer Flow: Plan → Review → Build

I decided to add an intermediate step to the usual "Plan" and "Build" flow:

- Plan (DeepSeek R1 Distill 32b): A very imaginative model with deep reasoning capability. It is responsible for outlining the initial technical strategy.

- Review (Gemma 4 31b): DeepSeek can be "too" creative and, at times, unstructured in its output. This is where Gemma comes in: a much more pragmatic, fast, and strict model. Its function is to polish the plan, eliminate redundancies, and ensure the proposal is executable and technically solid.

- Build (Qwen 3.6 27b): For pure code writing, Qwen 3.6 27b is currently the sweet spot of the mid-weight LLMs. Its great advantage is “preserve_thinking”: it maintains the context of its reasoning chain between iterations. This allows it to understand why it is making certain implementation decisions without getting lost in complex agentic workflows, an area where Gemma 4 usually suffers more.
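Stripped of tooling, the three layers are a simple chain in which each stage consumes the previous stage's output. The sketch below is a hypothetical illustration, not my OpenCode configuration: each `ModelCall` stands in for a request to whichever model serves that role, and the prompt wording is invented.

```python
from typing import Callable

# prompt -> completion; a stand-in for a real client call to a served model
ModelCall = Callable[[str], str]


def plan_review_build(
    task: str,
    planner: ModelCall,
    reviewer: ModelCall,
    builder: ModelCall,
) -> dict[str, str]:
    """Run the three stages in order, passing each result to the next stage."""
    draft_plan = planner(f"Outline a technical plan for: {task}")
    final_plan = reviewer(f"Tighten this plan and remove redundancies:\n{draft_plan}")
    code = builder(f"Implement this plan:\n{final_plan}")
    # Intermediate artifacts are kept so a human can inspect every step.
    return {"draft_plan": draft_plan, "final_plan": final_plan, "code": code}
```

Because the stages run strictly one after another, only one model needs to be resident at any moment, which fits the on-demand swapping approach described earlier.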

The usage model and the role of each agent are configured at the development tool level. In my case, I use my agent development configuration suite with OpenCode. Note that the skills & commands model is designed more for working with cloud models, and I have not yet optimized it for local use, but I do provide a base configuration for local use in the opencode.local.example.json example.

The Toll of Speed: Q5 vs Q4

Not everything is idyllic. The main pain point locally is the throughput, or token generation rate. I initially opted for Q5_K_M quantization for all three models, aiming for maximum fidelity. However, planning and review iterations could stretch for several minutes.

I am currently transitioning to Q4_K_M. While you sacrifice some theoretical precision, the gain in speed is notable. This is where the deterministic quality controls we discussed earlier must come into play: if the implementation deviates from the objective due to quantization, the system must force iteration.
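A common rule of thumb helps explain the speed difference: during token generation, a dense model is usually limited by memory bandwidth, because every new token requires reading all the weights once. Fewer bits per weight means fewer bytes to read. The bits-per-weight figures and the bandwidth below are rough assumptions for illustration, not measurements from my machine, and real throughput will be lower than this ceiling.

```python
def weight_bytes(n_params: float, bits_per_weight: float) -> float:
    """Approximate size of the quantized weights in bytes."""
    return n_params * bits_per_weight / 8


def decode_tokens_per_s_ceiling(
    n_params: float,
    bits_per_weight: float,
    bandwidth_gb_s: float,
) -> float:
    """Memory-bound upper limit: bandwidth divided by bytes read per token."""
    return bandwidth_gb_s * 1e9 / weight_bytes(n_params, bits_per_weight)


if __name__ == "__main__":
    N_PARAMS = 30e9        # roughly the model size discussed above
    BANDWIDTH_GB_S = 300   # hypothetical memory bandwidth
    # Approximate effective bits per weight; treat both values as assumptions.
    for label, bits in (("Q5_K_M", 5.7), ("Q4_K_M", 4.8)):
        size_gib = weight_bytes(N_PARAMS, bits) / 1024**3
        ceiling = decode_tokens_per_s_ceiling(N_PARAMS, bits, BANDWIDTH_GB_S)
        print(f"{label}: ~{size_gib:.1f} GiB, ceiling ~{ceiling:.1f} tokens/s")
```

Under this simple model, the speed-up from a smaller quantization is just the ratio of bits per weight; the memory freed for the KV cache is a separate benefit that the sketch does not capture.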

It should be noted that this is the main trade-off of the purely local approach. If we instead set up a private endpoint in the cloud for the team, we can abandon llama.cpp in favor of inference engines like vLLM served on GPUs. This allows us to run the models in FP8 or INT4, reuse the KV cache for prompt prefixes shared across the team's requests, and multiply throughput without the bottlenecks of a laptop.

OpenCode as the Center of Everything

None of this would make sense without a vendor-agnostic tool. I use OpenCode because it doesn't tie me to a provider or a subscription. It allows me to combine different model sources and orchestrate these complex flows with total freedom. Furthermore, it is open-source and auditable, with all the advantages that entails.

If we believe the future is local or hybrid, OpenCode is, in my opinion, one of the main players in regaining sovereignty over our tools. In fact, I am working on configurations that go beyond pure development, such as this support assistant for Engineering Managers integrated with Jira and GitHub (a native adaptation for OpenCode, with personalized functionalities, based on Julio César Pérez's original repository for Claude: claude-em).

Reference Repositories

- Local models setup: https://github.com/apenlor/llm-local-setup

- OpenCode Expert Mode (dev): https://github.com/apenlor/opencode-expert-mode

- OpenCode EM OS (Engineering Manager): https://github.com/apenlor/opencode-em-os

To sum up: More Engineering

Everything seems to indicate that the future of assisted development lies in relying on local or private cloud infrastructure. However, this transition demands a paradigm shift: we must shed the bad habits that have become ingrained in our way of working too quickly over the last few years.

Far from being a step backward, I sincerely believe it will be a purely positive change. We regain sovereignty, absolute control, and the privacy of our code. We also reduce the team's cognitive fatigue: burn-out (also called "AI fatigue") decreases when there are fewer massive PRs generated by a frontier model, and when your attention stays on a single problem instead of constantly jumping from one point to another. Furthermore, it reduces the risk of detachment and lack of control over the code.

Even on a macro level, the planet and our infrastructure will appreciate such a change in the usage model.

Personally, I am quite excited about the challenges this new stage brings. Optimizing LLMs, designing efficient validations, and orchestrating local flows is infinitely more stimulating, on a technical level, than the strategy of killing a fly with a cannon and burning tokens/computation as if there were no tomorrow.

I hope I'm right, and that this article doesn't age too poorly 🙂
